The tech giant recently released an update that adds to Alexa’s current skills: the new Live Translation feature for Amazon Alexa.

Alexa can now translate in real time between English and six other languages: Spanish, French, German, Italian, Brazilian Portuguese, and Hindi. What a great innovation!

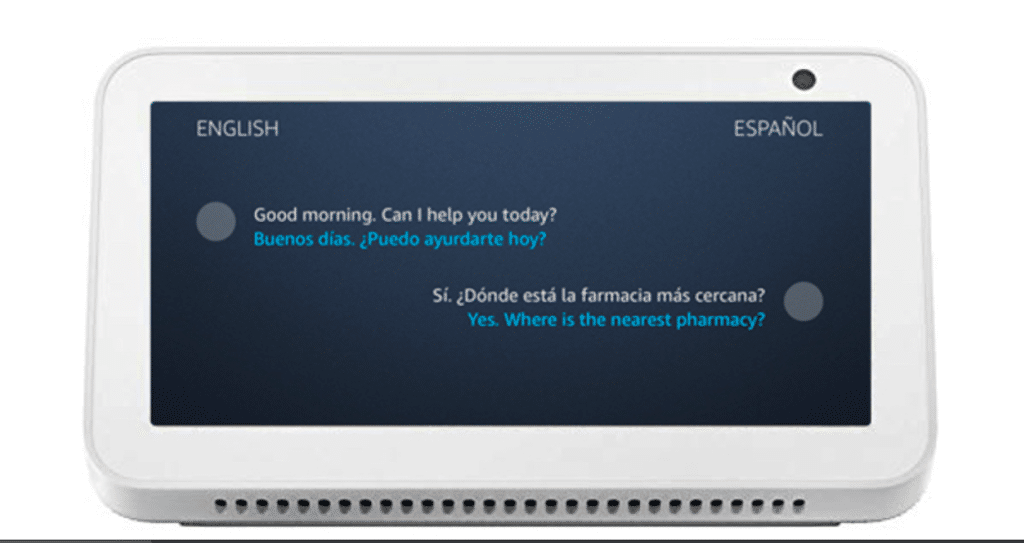

With the update, users can ask Alexa to translate a conversation, and Echo devices with a screen also display a transcript.

Additionally, Alexa can now identify natural pauses and sentence breaks in conversation more accurately.

Translation has never been easier, and this voice technology could be a game-changer for small businesses.

You can consider expanding your reach to new markets.

Your customers can now request information without having to worry about the language barrier.

Let’s look at how live translation for Amazon Alexa works.

Live Translation for Amazon Alexa

Live Translation allows individuals who speak two different languages to talk with each other.

Alexa acts as an interpreter, translating both sides of the conversation.

To start with, the feature will work with six pairs of languages — English and Spanish, French, German, Italian, Brazilian Portuguese, or Hindi — on Echo devices with locale set to English US.

Customers can ask Alexa to initiate a translation session for a pair of languages.

Once the session has started, customers can speak phrases or sentences in either language. Alexa automatically identifies which language is being spoken and translates each side of the conversation.

The Live Translation feature leverages several existing Amazon systems, including Alexa’s automatic-speech-recognition (ASR) system, Amazon Translate, and Alexa’s text-to-speech system.

Language ID

Alexa runs two ASR models in parallel, along with a separate model for language identification during a translation session. Whatever language you speak goes through both ASR models at once.

However, only one ASR model’s output is sent to the translation engine.

This parallel implementation is necessary to keep the latency of the translation request acceptable: waiting to begin speech recognition until the language ID model has returned a result would delay the playback of the translated audio.

Once the language ID system has selected a language, the associated ASR output is post-processed and sent to Amazon Translate.

The translation is passed to Alexa’s text-to-speech system for playback.
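The flow described above can be sketched in a few lines of Python. This is only an illustration: every function here (`asr_english`, `asr_spanish`, `identify_language`, `translate`) is a hypothetical stand-in that returns placeholder strings, not an Amazon API, and the language ID stub looks only at the audio, whereas the real model also uses the ASR outputs.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical stand-ins for the real components; they only return labels.
def asr_english(audio: str) -> str:
    return f"en-transcript({audio})"

def asr_spanish(audio: str) -> str:
    return f"es-transcript({audio})"

def identify_language(audio: str) -> str:
    # Toy rule for illustration: treat audio starting with "hola" as Spanish.
    return "es" if audio.startswith("hola") else "en"

def translate(text: str, source: str, target: str) -> str:
    return f"[{source}->{target}] {text}"

def handle_utterance(audio: str) -> str:
    # Start both recognizers and language ID at once, so recognition
    # does not wait for the language decision.
    with ThreadPoolExecutor(max_workers=3) as pool:
        en_future = pool.submit(asr_english, audio)
        es_future = pool.submit(asr_spanish, audio)
        lang = pool.submit(identify_language, audio).result()
        # Only the transcript for the selected language is passed on.
        chosen = es_future if lang == "es" else en_future
        transcript = chosen.result()
    target = "en" if lang == "es" else "es"
    return translate(transcript, source=lang, target=target)

print(handle_utterance("hola amigo"))
```

The point of the design is that the slower recognition step is already underway by the time the language decision arrives, so nothing is waiting on it.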

Furthermore, Amazon found that the language ID model works best when it bases its decision on both acoustic information about the speech signal and the outputs of both ASR models.

The ASR data is especially helpful in the case of non-native speakers of a language, whose speech often has consistent acoustic properties regardless of the language being spoken.
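To see why combining the two signals helps, here is a toy sketch. It assumes each language gets an acoustic score and an ASR confidence score between 0 and 1, and blends them with a fixed weight. Amazon’s language ID model is a trained model, so the linear blend, the function name `pick_language`, and all the numbers are assumptions made purely for illustration.

```python
def pick_language(acoustic_scores, asr_confidences, acoustic_weight=0.5):
    """Blend two per-language scores and return the best-scoring language."""
    combined = {}
    for lang in acoustic_scores:
        combined[lang] = (acoustic_weight * acoustic_scores[lang]
                          + (1 - acoustic_weight) * asr_confidences[lang])
    return max(combined, key=combined.get)

# A speaker with an English accent speaking Spanish: the acoustics lean
# slightly toward English, but the Spanish recognizer is far more confident.
acoustic = {"en": 0.55, "es": 0.45}
asr = {"en": 0.30, "es": 0.90}

print(pick_language(acoustic, asr))                       # blended decision
print(pick_language(acoustic, asr, acoustic_weight=1.0))  # acoustics only
```

With acoustics alone the toy example picks English; once the recognizer confidences are blended in, it picks Spanish.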

Speech Recognition

Like most existing ASR systems, the ones used for Alexa Live Translation include an acoustic model and a language model.

The acoustic model converts audio into phonemes, the smallest units of speech.

The language model encodes the probabilities of particular strings of words, which helps the ASR system decide between alternative interpretations of the same sequence of phonemes.

Like Alexa’s existing ASR models, each ASR system used for Live Translation has two types of language models: a traditional language model, which encodes probabilities for relatively short strings of words (typically around four), and a neural language model, which can account for longer-range dependencies.
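As a rough illustration of how a traditional language model helps choose between interpretations that sound alike, here is a tiny bigram model (two-word strings rather than four) with add-one smoothing. The three-sentence corpus and the function names are made up for this example; a production model is trained on far more data.

```python
from collections import Counter
import math

def train_bigram(sentences):
    """Count single words and adjacent word pairs in a small corpus."""
    unigrams, bigrams = Counter(), Counter()
    for sentence in sentences:
        words = ["<s>"] + sentence.lower().split()
        unigrams.update(words)
        bigrams.update(zip(words, words[1:]))
    return unigrams, bigrams

def log_prob(sentence, unigrams, bigrams):
    """Log-probability of a sentence under the add-one-smoothed bigram model."""
    vocab_size = len(unigrams)
    words = ["<s>"] + sentence.lower().split()
    total = 0.0
    for prev, word in zip(words, words[1:]):
        total += math.log((bigrams[(prev, word)] + 1)
                          / (unigrams[prev] + vocab_size))
    return total

corpus = [
    "recognize speech with alexa",
    "alexa can recognize speech",
    "we walked on a nice beach",
]
unigrams, bigrams = train_bigram(corpus)

# Two interpretations of similar-sounding audio.
for candidate in ("recognize speech", "wreck a nice beach"):
    print(candidate, round(log_prob(candidate, unigrams, bigrams), 2))
```

The candidate whose word pairs appear in the corpus gets the higher score, which is the kind of evidence the ASR system uses to pick one interpretation over another.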

The Live Translation language models were trained to handle more-conversational speech covering a wider range of topics than Alexa’s existing ASR models.

Amazon used connectionist temporal classification (CTC) to train the acoustic models.

To make the acoustic model more robust, the company also mixed noise into the training set, enabling the model to focus on characteristics of the input signal that vary less under different acoustic conditions.
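Mixing noise into training audio is commonly done at a chosen signal-to-noise ratio (SNR). The sketch below shows that general technique on plain Python lists. The article does not describe Amazon’s actual noise sources or levels, so the function `mix_noise`, the synthetic tone, and the 10 dB target are all assumptions.

```python
import math
import random

def mix_noise(signal, noise, snr_db):
    """Scale the noise to the requested SNR (in dB) and add it to the signal."""
    signal_power = sum(x * x for x in signal) / len(signal)
    noise_power = sum(n * n for n in noise) / len(noise)
    target_noise_power = signal_power / (10 ** (snr_db / 10))
    scale = math.sqrt(target_noise_power / noise_power)
    return [x + scale * n for x, n in zip(signal, noise)]

# Illustrative data: a short synthetic tone and uniform random noise.
random.seed(0)
clean = [math.sin(2 * math.pi * 5 * t / 100) for t in range(100)]
noise = [random.uniform(-1, 1) for _ in range(100)]
noisy = mix_noise(clean, noise, snr_db=10)
```

Training on both `clean` and `noisy` versions of the same utterance pushes a model toward features that survive the added noise.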

Detail Work

Adapting to conversational speech also required a modification of Alexa’s end-pointer, which determines when the customer has stopped speaking and Alexa needs to follow up.

The end-pointer already distinguishes between a pause at the end of a sentence, which indicates that the customer has finished speaking, and a mid-sentence pause, which may be allowed to go on a little longer.

For Live Translation, the end-pointer is modified to tolerate longer pauses at the ends of sentences, as speakers engaged in long conversations will often take time between sentences to formulate their thoughts.
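The end-pointer behavior can be pictured as a pair of pause thresholds. In this minimal sketch the threshold values and the function `utterance_ended` are invented for illustration; the article does not give Amazon’s actual values, and the real end-pointer is a trained component rather than a fixed rule.

```python
def utterance_ended(pause_seconds, at_sentence_end, live_translation=False):
    """Decide whether a pause means the speaker is done (illustrative rule)."""
    # Assumed values: mid-sentence pauses are allowed to run longer than
    # sentence-final ones, and Live Translation raises the sentence-final limit.
    mid_sentence_limit = 1.5
    end_of_sentence_limit = 1.5 if live_translation else 0.6
    limit = end_of_sentence_limit if at_sentence_end else mid_sentence_limit
    return pause_seconds >= limit

# A one-second pause after a complete sentence:
print(utterance_ended(1.0, at_sentence_end=True))
print(utterance_ended(1.0, at_sentence_end=True, live_translation=True))
```

In normal use the one-second pause ends the turn; in a translation session the same pause is treated as thinking time and Alexa keeps listening.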

Lastly, because Amazon Translate’s neural-machine-translation system was designed to work with textual input, the Live Translation system adjusts for common disfluencies and formats the ASR output.
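The disfluency cleanup and formatting step can be approximated with a few regular expressions. This is a simplified sketch under stated assumptions: the filler list, the repeated-word rule, and the function `clean_transcript` are illustrative and do not reflect how Amazon implements this step.

```python
import re

# Assumed filler words; a real system would handle many more cases.
FILLERS = re.compile(r"\b(?:um+|uh+)\b,?\s*", re.IGNORECASE)
# Immediate word repetitions such as "i i" or "the the".
# Note: this also collapses legitimate repeats such as "that that".
REPEATS = re.compile(r"\b(\w+)(?:\s+\1\b)+", re.IGNORECASE)

def clean_transcript(text):
    """Strip fillers and stutters, then capitalize and punctuate."""
    text = FILLERS.sub("", text)
    text = REPEATS.sub(r"\1", text)
    text = re.sub(r"\s+", " ", text).strip()
    if not text:
        return text
    text = text[0].upper() + text[1:]
    if text[-1] not in ".?!":
        text += "."
    return text

print(clean_transcript("um i i would like uh two tickets"))
# I would like two tickets.
```

Text cleaned up this way looks more like the written sentences a text-based translation engine expects.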

Currently, Amazon is exploring several approaches to further improve the Live Translation feature.

One of these is semi-supervised learning, in which Alexa’s existing models annotate unlabeled data.

Amazon uses the highest-confidence outputs as additional training examples for Amazon’s translation-specific ASR and language ID models.
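The selection step in semi-supervised learning amounts to keeping only the machine-generated labels the model is most sure about. In the sketch below, the 0.9 threshold, the tuple layout, and the function `select_pseudo_labels` are assumptions for illustration.

```python
def select_pseudo_labels(predictions, threshold=0.9):
    """Keep (utterance, label) pairs whose confidence meets the threshold."""
    return [(utterance, label)
            for utterance, label, confidence in predictions
            if confidence >= threshold]

# Hypothetical output of an existing model on unlabeled audio clips.
predictions = [
    ("clip_001", "donde esta la estacion", 0.97),
    ("clip_002", "where is the station", 0.62),
    ("clip_003", "ich möchte zwei karten", 0.91),
]
extra_training_examples = select_pseudo_labels(predictions)
print(extra_training_examples)
```

Only the first and third clips pass the filter, and those would be added to the training set as if they had been labeled by hand.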

Amazon is also working to adapt the neural-machine-translation engine to conversational-speech data and to generate translations that incorporate relevant context, such as tone of voice or formal versus informal translations.

The goal is to improve the fluency of the translation and its robustness to spoken-language input.

Amazon is continuously working to improve the quality of the overall translations and of colloquial and idiomatic expressions in particular.

Using Live Translation

The tech behind Live Translation sounds impressive, and you can read more about it in Amazon’s announcement post.

Live Translation is an AI-powered feature that recognizes multiple languages as they’re spoken and translates for both speakers.

It is similar to the translation functions available from other digital assistants.

Live Translation is easy to use, but there are some restrictions to keep in mind.

Note that the feature is only available on Echo devices in the U.S. To use Live Translation, users need to set their Echo device’s location and system language to “English U.S.”

Users can change both in the Alexa mobile app if needed, but this option is not available in all countries, and doing so changes Alexa’s speaking accent, along with the time, weather, and local alerts they receive.

Live Translation works on all Echo devices.

Echo Show users get an extra feature: the Echo Show’s screen displays a live translation for both speakers in each language, so you can read back through the most recently spoken sentences.

Echo devices are currently the only Alexa devices that support Live Translation.

Steps to Use Live Translation for Amazon Alexa on an Echo Device

The steps are quick. All you need to do is the following:

1. When you’re ready to use Live Translation, say: “Alexa, please translate English to [second language].” Other variations of the phrase should work; just make sure you tell Alexa which languages to translate.

2. Carry on your conversation as normal. Alexa will listen in and translate as needed. For your Echo device to clearly hear and interpret both speakers, you need to stay close to it.

3. When the conversation is over, say “Alexa, stop” to turn off Live Translation.

Final Thoughts

Live Translation for Amazon Alexa is a new feature that allows the user to understand other languages easily.

Whether you are traveling to a remote country or catching up with foreign relatives, translating one language to another has never been so easy!

Simply ask Alexa to initiate a translation session for two languages (English and Spanish, for example), and you are good to go.

You can then start chatting: say a phrase in either language, and Alexa will automatically identify the language and translate your words.

The feature currently works with six pairs of languages (English and Spanish, French, German, Italian, Brazilian Portuguese, or Hindi) and requires an Echo device with the locale set to English US.

Hopefully, Live Translation will come to other Alexa-powered devices and other countries in the future, but for now, it could make visiting with international guests easier.